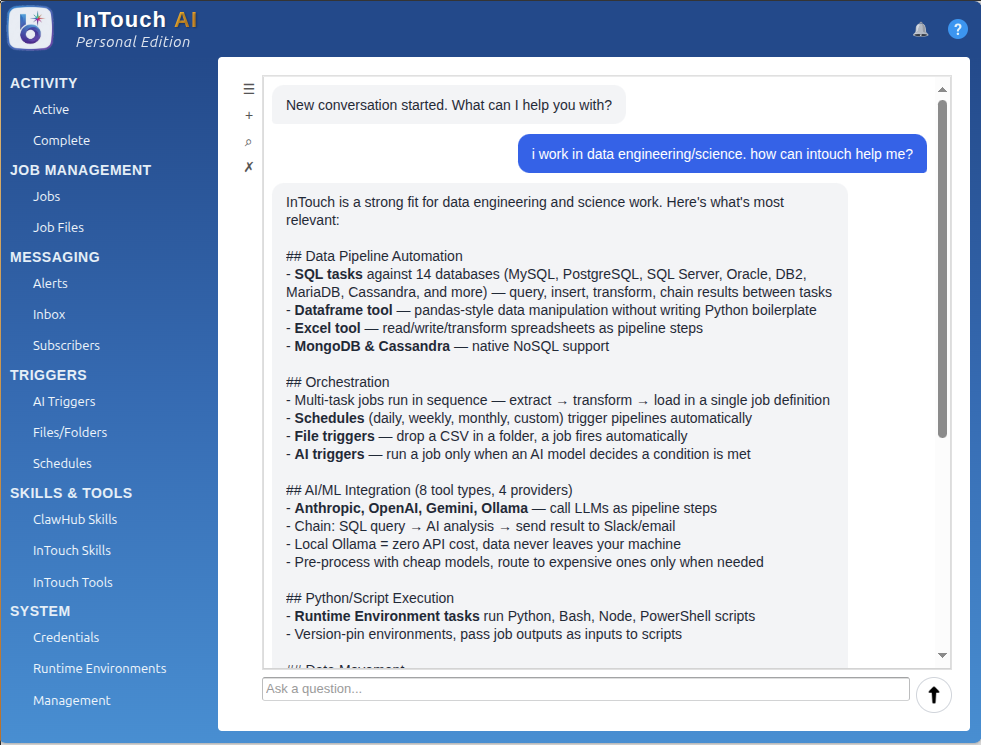

InTouch speaks natively to eight AI providers in every edition: Anthropic Claude, OpenAI, Mistral, Groq, DeepSeek, xAI Grok, Google Gemini, and Ollama (for local models). Hugging Face rounds out the set as a ninth, job-only provider. Each is a first-class tool. The assistant and every individual AI tool can each target a different provider, and you switch a tool's provider by changing its tool name and credential reference.

If Anthropic refuses requests in your domain (and it sometimes does for financial advice, legal synthesis, or medical analysis), swap to Ollama with a local model. No provider-side usage policy, zero API cost, no data leaving the network. It works air-gapped once the model weights have been pulled. The job definition doesn't change; only the tool name and credential do.
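A minimal sketch of that swap, assuming a job step can be modeled as a small immutable record. The `AIStep` type, its fields, and the tool and credential names (`anthropic_chat`, `ollama_chat`, `cred/...`) are hypothetical illustrations, not InTouch's actual job schema. The point is only that a provider swap touches two fields and leaves the rest of the step alone.

```python
from dataclasses import dataclass, replace


# Hypothetical job-step shape: field names are illustrative assumptions,
# not InTouch's real job-definition schema.
@dataclass(frozen=True)
class AIStep:
    tool: str            # hypothetical provider tool name
    credential: str      # hypothetical credential reference
    prompt: str
    max_tokens: int = 1024


def swap_provider(step: AIStep, tool: str, credential: str) -> AIStep:
    """Return a copy bound to another provider; every other field is kept."""
    return replace(step, tool=tool, credential=credential)


cloud = AIStep(tool="anthropic_chat", credential="cred/anthropic-prod",
               prompt="Summarise the attached contract clauses.")
local = swap_provider(cloud, tool="ollama_chat", credential="cred/ollama-local")

print(local)
```

Because the record is frozen, the original cloud-bound step is untouched and both variants can coexist, for example one per environment.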

This isn't an abstraction layer that papers over provider differences. Each provider's distinctive features (Claude's extended thinking, OpenAI's function-calling variants, Gemini's multimodal input, Ollama's local model roster) are exposed directly. Swap providers when the use case changes; don't settle for a lowest-common-denominator wrapper.
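To make "exposed directly" concrete, here is a sketch of two provider-native request bodies side by side. It is not InTouch's implementation. The endpoints and fields follow the public Anthropic Messages API (including the `thinking` block for extended thinking) and the Ollama `/api/chat` endpoint (including its `options` map). The model IDs are examples only. Nothing is sent over the network; the functions just build the requests.

```python
import json


def anthropic_thinking_request(prompt: str, api_key: str,
                               budget_tokens: int = 2048,
                               max_tokens: int = 4096) -> dict:
    # Extended thinking requires budget_tokens >= 1024 and < max_tokens.
    if not 1024 <= budget_tokens < max_tokens:
        raise ValueError("budget_tokens must be >= 1024 and < max_tokens")
    return {
        "url": "https://api.anthropic.com/v1/messages",
        "headers": {
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        "body": {
            "model": "claude-3-7-sonnet-20250219",  # example model ID
            "max_tokens": max_tokens,
            "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
            "messages": [{"role": "user", "content": prompt}],
        },
    }


def ollama_chat_request(prompt: str, num_ctx: int = 8192) -> dict:
    # Local Ollama server on its default port; no API key involved.
    return {
        "url": "http://localhost:11434/api/chat",
        "headers": {"content-type": "application/json"},
        "body": {
            "model": "llama3.1",  # any locally pulled model tag
            "messages": [{"role": "user", "content": prompt}],
            "stream": False,
            "options": {"num_ctx": num_ctx},  # Ollama-specific context size
        },
    }


if __name__ == "__main__":
    for req in (anthropic_thinking_request("Review this clause.", api_key="sk-..."),
                ollama_chat_request("Review this clause.")):
        print(req["url"])
        print(json.dumps(req["body"], indent=2))
```

A generic wrapper would have to drop either the `thinking` block or the `options` map to fit both shapes. Keeping each request native is what lets the provider-specific features through.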